The Multimodal AI Advantage: Why Computer Vision Alone Is Not Enough

Computer vision has become the default entry point for industrial AI. Install cameras, train models, detect defects. The logic is straightforward, the results are often impressive, and the technology has matured to the point where deployment timelines are measured in weeks rather than years. But there is a fundamental limitation that many organizations discover only after they have invested heavily in vision-only solutions: cameras can only see what is visible.

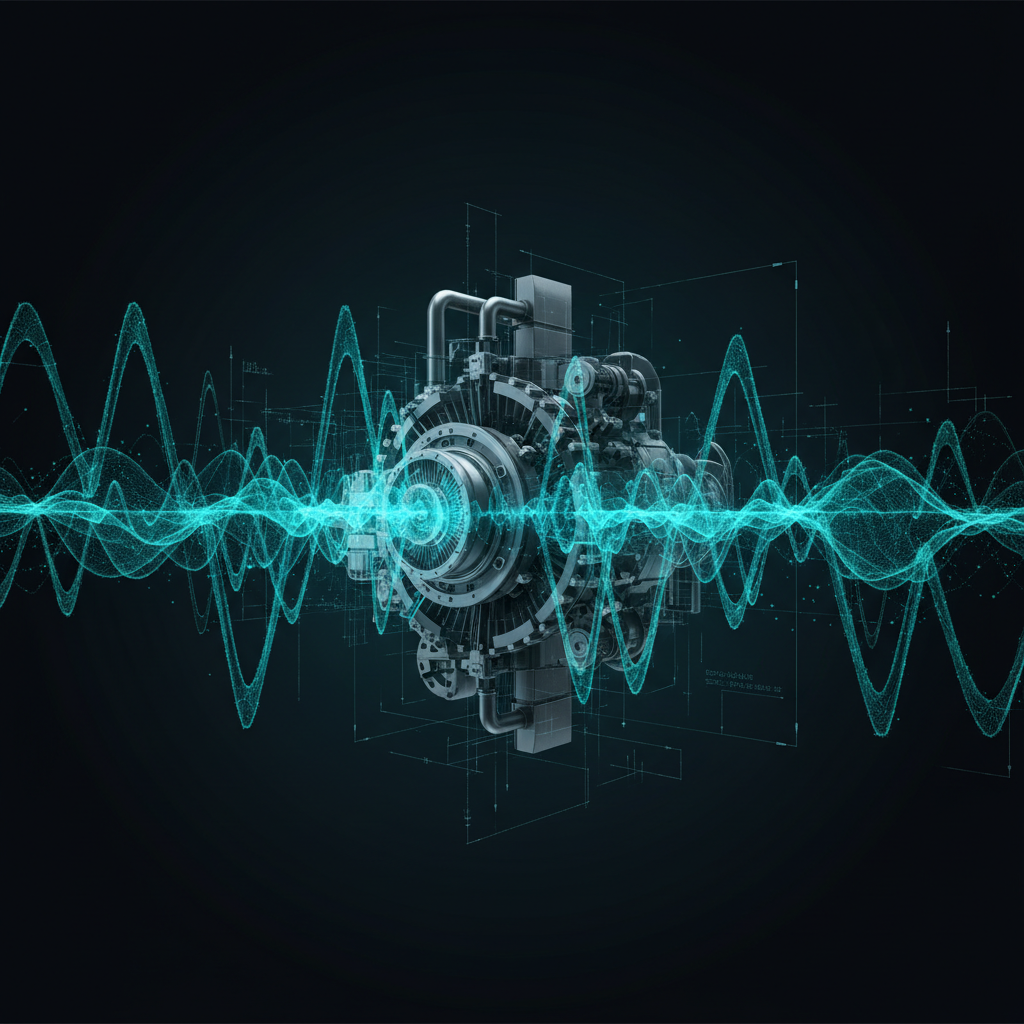

This sounds obvious, yet the implications are profound. A compressor housing that looks pristine on the outside may be developing a bearing failure that only manifests as a subtle acoustic signature. A conveyor belt that passes visual inspection may be generating vibration patterns that indicate imminent roller failure. A warehouse shelf that appears fully stocked on camera may contain products with temperature excursions detected only by IoT sensors. In each case, a single-modal AI system delivers a confident, and dangerously incomplete, assessment.

The future of industrial AI is not vision alone. It is a multimodal AI platform that fuses computer vision with audio intelligence, IoT sensor data, text and OCR processing, and workflow context to deliver the kind of comprehensive operational awareness that no single modality can achieve.

What Multimodal AI Actually Means in Practice

The term "multimodal" is used loosely in the AI industry, often to describe systems that can process different data types in isolation. True multimodal intelligence for industrial operations requires something more rigorous: the ability to correlate signals across modalities in real time and make decisions based on the combined evidence.

A genuine multimodal AI platform integrates:

- Computer Vision: Defect detection, object counting, safety compliance, and process monitoring from existing cameras and drones

- Audio AI: Acoustic anomaly detection, leak identification, mechanical signature classification, and environmental noise monitoring

- IoT Sensor Fusion: Temperature, vibration, pressure, humidity, flow rate, and other telemetry streams, analyzed for predictive insights

- Text and OCR: Document processing, label reading, work order extraction, and compliance form analysis

- Workflow Data: Maintenance history, operator logs, shift schedules, production plans, and ERP/CMMS records

Each modality alone tells a partial story. Together, they create a 360-degree view of asset and process health that enables decisions no single data stream could support.
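Correlating signals across modalities starts with lining up findings that refer to the same asset at roughly the same time. The Python sketch below shows one simple way to do that. It assumes a hypothetical `Finding` record emitted by each modality and a fixed time window (`window_s`) anchored at the first finding in each group. A production platform would also need to handle clock skew, late-arriving data, and continuous streaming input.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One detection emitted by a single modality (hypothetical schema)."""
    asset_id: str
    modality: str      # e.g. "vision", "audio", "iot", "ocr"
    timestamp: float   # seconds since epoch
    label: str
    confidence: float  # 0.0 to 1.0


def correlate(findings, window_s=300.0):
    """Group each asset's findings into windows anchored at the first finding
    of the window; keep only windows that contain two or more modalities."""
    by_asset = defaultdict(list)
    for f in findings:
        by_asset[f.asset_id].append(f)

    correlated = []
    for asset_id, items in by_asset.items():
        items.sort(key=lambda f: f.timestamp)
        window = []
        for f in items:
            # Close the current window once a finding falls outside it.
            if window and f.timestamp - window[0].timestamp > window_s:
                if len({w.modality for w in window}) >= 2:
                    correlated.append((asset_id, window))
                window = []
            window.append(f)
        if len({w.modality for w in window}) >= 2:
            correlated.append((asset_id, window))
    return correlated


events = [
    Finding("compressor-07", "audio", 1000.0, "bearing_wear", 0.82),
    Finding("compressor-07", "iot", 1120.0, "vibration_spike", 0.74),
    Finding("conveyor-02", "vision", 1050.0, "belt_tear", 0.91),
]
for asset, window in correlate(events):
    print(asset, [f.modality for f in window])
# compressor-07 ['audio', 'iot']
```

The conveyor finding is dropped because only one modality reported on that asset. Single-modality findings would still flow to their own alerts; the correlated groups are what a cross-modal rule would then evaluate.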

The World's First Multimodal Rule Engine for Industrial Operations

Collecting multimodal data is one challenge. Acting on it intelligently is another entirely. This is where Sensfix's mmAI rule engine delivers a breakthrough: it is the world's first multimodal rule engine purpose-built for industrial operations.

Traditional rule engines operate on structured data: if temperature exceeds threshold X, trigger alert Y. The mmAI rule engine operates across modalities, enabling rules that reflect how industrial problems actually manifest:

IF visual defect detected + audio anomaly confirmed + sensor reading spike → THEN escalate priority to critical + auto-dispatch maintenance team + update CMMS work order

This cross-modal correlation is what separates a multimodal AI platform from a collection of independent AI tools that happen to coexist. The rule engine evaluates evidence from multiple sources simultaneously, weighing confidence scores across modalities to reduce false positives and catch true positives that any single modality would miss.
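The rule above can be sketched in a few lines of Python. This is an illustrative model, not the mmAI rule engine's actual syntax or API. The `Rule` class, the modality names, the per-modality minimum confidences, the weights, and the action strings are all hypothetical. The sketch shows the two ideas described in the paragraph above: every modality must independently confirm its condition, and a weighted combined confidence must clear a threshold before any action fires.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    """A cross-modal rule (illustrative): each listed modality must report its
    label above a minimum confidence, and the weighted combined confidence
    must reach combined_threshold before the actions fire."""
    name: str
    conditions: dict          # modality -> (expected label, min confidence)
    weights: dict             # modality -> weight in the combined score
    combined_threshold: float
    actions: list = field(default_factory=list)

    def evaluate(self, evidence):
        """evidence: modality -> (label, confidence). Returns the actions to fire."""
        for modality, (label, min_conf) in self.conditions.items():
            observed = evidence.get(modality)
            if observed is None or observed[0] != label or observed[1] < min_conf:
                return []  # one modality failed to confirm: no action
        total_weight = sum(self.weights[m] for m in self.conditions)
        combined = sum(self.weights[m] * evidence[m][1] for m in self.conditions) / total_weight
        return list(self.actions) if combined >= self.combined_threshold else []


critical_rule = Rule(
    name="visual+audio+sensor -> critical",
    conditions={
        "vision": ("defect", 0.6),
        "audio": ("anomaly", 0.6),
        "sensor": ("spike", 0.5),
    },
    weights={"vision": 1.0, "audio": 1.0, "sensor": 0.5},
    combined_threshold=0.7,
    actions=["set_priority:critical", "dispatch:maintenance", "cmms:update_work_order"],
)

evidence = {"vision": ("defect", 0.81), "audio": ("anomaly", 0.77), "sensor": ("spike", 0.66)}
print(critical_rule.evaluate(evidence))
# ['set_priority:critical', 'dispatch:maintenance', 'cmms:update_work_order']
```

Here the weighted score is (0.81 + 0.77 + 0.5 × 0.66) / 2.5 ≈ 0.76, which clears the 0.7 threshold. If all three modalities only barely pass their individual minimums, the combined score stays below threshold and nothing fires. That is one way a rule can suppress weak, coincidental signals.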

Cross-Modal Correlation in Action: The Compressor Health Example

Consider a real-world scenario from rail fleet maintenance. A visual inspection of a train compressor, whether performed by a human technician or a computer vision model, shows no external defects. The housing is intact, there is no visible leakage, mounting bolts are secure. A vision-only system would classify this compressor as healthy.

But an audio AI model analyzing the compressor's acoustic signature detects a subtle bearing wear pattern, a specific frequency shift that emerges weeks before catastrophic failure. The bearing is degrading inside the housing, invisible to any camera but clearly audible to a trained acoustic model.
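To make the idea of a frequency shift concrete, here is a deliberately simplified Python sketch. It tracks the dominant spectral peak of a short audio frame and flags drift away from a healthy baseline. This is a toy built on stated assumptions, not the trained acoustic model described above. Real bearing diagnostics typically examine envelope spectra and characteristic fault frequencies rather than a single peak. The naive DFT used here is O(n^2) and was chosen only so the example needs nothing beyond the standard library. The function names, the synthetic tones, the 1024 Hz sample rate, and the 8 Hz tolerance are all hypothetical.

```python
import cmath
import math


def dominant_frequency(samples, sample_rate):
    """Frequency (Hz) of the strongest non-DC bin of a naive DFT.
    O(n^2): acceptable for a short illustrative frame only."""
    n = len(samples)
    best_k, best_mag = 1, -1.0
    for k in range(1, n // 2):
        acc = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        if abs(acc) > best_mag:
            best_k, best_mag = k, abs(acc)
    return best_k * sample_rate / n


def frequency_shift_alert(baseline_hz, frame, sample_rate, tolerance_hz):
    """Return (alert, current_hz): alert is True when the dominant peak has
    drifted more than tolerance_hz from the healthy baseline."""
    current_hz = dominant_frequency(frame, sample_rate)
    return abs(current_hz - baseline_hz) > tolerance_hz, current_hz


def tone(freq_hz, sample_rate, n):
    """Synthetic pure tone standing in for a recorded audio frame."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]


SAMPLE_RATE = 1024  # Hz; with 256 samples each DFT bin spans 4 Hz
healthy = tone(120, SAMPLE_RATE, 256)
worn = tone(132, SAMPLE_RATE, 256)
print(frequency_shift_alert(120.0, healthy, SAMPLE_RATE, 8.0))  # (False, 120.0)
print(frequency_shift_alert(120.0, worn, SAMPLE_RATE, 8.0))     # (True, 132.0)
```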

A multimodal system correlates these findings automatically: visual inspection passed, but acoustic anomaly detected, and vibration sensor data from the compressor mounting confirms an emerging imbalance. The mmAI rule engine evaluates the combined evidence and escalates the finding to maintenance planning, weeks before the compressor would have failed in service.
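The compressor logic can be expressed as a small decision function. In this Python sketch, the thresholds (`acoustic_threshold`, `vibration_ratio`), the `CompressorEvidence` fields, and the disposition strings are hypothetical placeholders. What matters is the structure: a passed visual inspection does not close the case when two independent non-visual signals agree.

```python
import math
from dataclasses import dataclass


@dataclass
class CompressorEvidence:
    """Per-compressor findings from three modalities (hypothetical schema)."""
    visual_defect: bool            # outcome of the vision inspection
    acoustic_anomaly_score: float  # 0.0 (normal) to 1.0 (anomalous), from an audio model
    vibration_rms: float           # RMS of the mounting accelerometer signal, in g
    vibration_baseline_rms: float  # RMS recorded when the unit was known healthy


def rms(samples):
    """Root mean square of a vibration frame."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def assess(ev, acoustic_threshold=0.7, vibration_ratio=1.5):
    """Return a disposition string; thresholds are illustrative defaults."""
    acoustic_hit = ev.acoustic_anomaly_score >= acoustic_threshold
    vibration_hit = ev.vibration_rms >= vibration_ratio * ev.vibration_baseline_rms
    if ev.visual_defect and (acoustic_hit or vibration_hit):
        return "critical"
    if acoustic_hit and vibration_hit:
        # Nothing visible, but two independent modalities agree: hidden fault.
        return "escalate_to_maintenance_planning"
    if ev.visual_defect or acoustic_hit or vibration_hit:
        # A single unconfirmed signal is watched rather than escalated.
        return "monitor"
    return "healthy"


ev = CompressorEvidence(
    visual_defect=False,
    acoustic_anomaly_score=0.83,
    vibration_rms=rms([0.31, -0.29, 0.35, -0.33]),
    vibration_baseline_rms=0.18,
)
print(assess(ev))  # escalate_to_maintenance_planning
```

A vision-only check on the same compressor would see `visual_defect=False` and stop there. The combined function instead routes the unit to maintenance planning, because the acoustic score and the vibration level (about 0.32 g against a 0.27 g limit) both exceed their thresholds.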

This is not a theoretical scenario. It reflects the actual deployment architecture at Alstom, where Sensfix operates nine computer vision detection models alongside audio AI for compressor health monitoring across rail fleet maintenance facilities. The combination of visual and acoustic intelligence has identified degradation patterns that neither modality would have caught independently.

Platform Versus Point Solution: The Economic Argument

Beyond technical superiority, the multimodal platform approach delivers a compelling economic advantage. Consider the typical enterprise that needs industrial AI capabilities across multiple domains:

- A computer vision vendor for visual inspection

- A separate acoustic monitoring vendor for machinery health

- An IoT platform vendor for sensor data management

- An OCR vendor for document processing

- A workflow automation vendor for maintenance dispatch

- Custom integration work to connect them all

This fragmented approach means five to ten separate vendor contracts, each with its own licensing model, support structure, integration requirements, and upgrade cycle. The total cost of ownership, including procurement overhead, integration maintenance, and the organizational burden of managing multiple vendor relationships, far exceeds the sum of individual license fees.

A unified multimodal AI platform like the Sensfix SAAI Suite replaces this fragmentation with a single license, a single integration layer, and a single support relationship. New modalities and detection capabilities can be activated without new procurement cycles. Cross-modal rules can be configured without custom integration projects. The platform grows with operational needs rather than requiring a new vendor evaluation for each new use case.
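Why can a unified platform add modalities and rules without a new integration project? One common architectural pattern combines a shared analyzer registry with rules stored as data. The Python sketch below assumes a hypothetical `register` decorator, stub analyzers that each return a confidence score, and rules held in a plain list. It is not the SAAI Suite's interface, only an illustration of the idea.

```python
from typing import Callable, Dict

# Hypothetical registry: modality name -> analyzer returning a confidence in [0, 1].
ANALYZERS: Dict[str, Callable[[dict], float]] = {}


def register(modality):
    """Decorator that plugs a new modality analyzer into the shared registry."""
    def wrap(fn):
        ANALYZERS[modality] = fn
        return fn
    return wrap


@register("vision")
def vision_stub(raw):
    return raw.get("defect_score", 0.0)


@register("audio")
def audio_stub(raw):
    return raw.get("anomaly_score", 0.0)


# Rules are plain data: each names the minimum confidence per modality and an action.
RULES = [
    {"name": "vision+audio", "require": {"vision": 0.6, "audio": 0.6}, "action": "open_work_order"},
]


def run(raw_by_modality):
    """Score every registered modality present in the input, then fire matching rules."""
    scores = {m: ANALYZERS[m](raw) for m, raw in raw_by_modality.items() if m in ANALYZERS}
    return [
        rule["action"]
        for rule in RULES
        if all(m in scores and scores[m] >= t for m, t in rule["require"].items())
    ]


print(run({"vision": {"defect_score": 0.7}, "audio": {"anomaly_score": 0.65}}))  # ['open_work_order']


# Adding a modality and a rule touches neither run() nor the existing analyzers.
@register("iot")
def iot_stub(raw):
    return raw.get("spike_score", 0.0)


RULES.append({"name": "audio+iot", "require": {"audio": 0.7, "iot": 0.7}, "action": "schedule_inspection"})
print(run({"audio": {"anomaly_score": 0.8}, "iot": {"spike_score": 0.9}}))  # ['schedule_inspection']
```

With point solutions, each vendor's output format differs, so every new cross-modal rule needs glue code between systems. When analyzers share one registry and one evidence format, a new rule becomes a configuration entry instead.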

42+ Proprietary Detection Models Across Six Verticals

The practical value of a multimodal platform depends on the depth of its model library. Sensfix has developed over 42 proprietary defect detection models spanning six industrial verticals: rail and transit, ports and maritime, energy and utilities, manufacturing, retail, and facilities management.

These models are not generic computer vision classifiers retrained on industrial images. They are purpose-built detection systems developed in collaboration with domain operators, trained on real-world defect data, and validated in production environments. Each model encodes specific domain knowledge: the difference between acceptable and defective weld beads on rail stock, the visual signature of corrosion under insulation on process piping, the acoustic profile of a healthy versus degraded compressor bearing.

This breadth of coverage means that enterprises deploying the Sensfix platform gain immediate access to detection capabilities that would take years to develop internally, and can deploy them in multimodal configurations from day one.

Why the Industry Is Moving Toward Multimodal

The limitations of single-modal AI are not a future concern. They are a present reality that organizations encounter as soon as they move past initial pilot deployments. The first camera-based inspection project succeeds, generating enthusiasm. The second and third projects succeed as well. But eventually, the organization encounters a detection challenge that cameras alone cannot solve, and discovers that its vision-only vendor has no path forward.

A multimodal AI platform anticipates this trajectory. It provides the computer vision capabilities that most organizations need first, while ensuring that audio AI, sensor fusion, OCR, and workflow intelligence are available when operational reality demands them. The architecture is ready before the need becomes urgent.

For industrial operations leaders evaluating AI investments, the strategic question is clear: invest in a platform that can evolve across modalities, or accept the limitations, and eventual replacement costs, of a vision-only point solution. The organizations that choose multimodal today will have a compounding advantage as their cross-modal intelligence deepens over time.

Ready to See These Results?

Book a personalized demo and see how the SAAI Suite delivers measurable outcomes for your operations.